Siqi Li

PhD Student · UC Irvine EECS · AI Alignment, Robustness & VLA Systems

Engineering Hall 308

Irvine, CA 92697

"Used generative AI to turn myself into Link. Looks cool, but also a great reminder that AI reliability is still very much a problem."

Hi! I’m Siqi 👋

I work on AI robustness and alignment, with a particular interest in what happens when intelligent systems leave clean benchmarks and enter the real world.

My research asks a simple but uncomfortable question:

How do we know an AI system is doing the right thing when it can fail quietly, face distribution shift, or be intentionally attacked?

While much robustness research is mathematical, I approach the problem from both sides: theoretically and engineering-first. I build systems, stress them, break them, and then ask which guarantees actually survive deployment.

I’m a PhD student in EECS at UC Irvine, advised by Prof. Yasser Shoukry in the Resilient Cyber-Physical Systems Lab.

Previously, I was a visiting researcher at Caltech, working with Prof. John Doyle on language-to-robot control.

How I Think About Robustness

I’m especially interested in robustness for vision-language-action systems, where failures are subtle, delayed, and hard to detect.

My work spans:

- Formal and provable guarantees (e.g., adversarial detection with correctness proofs)

- System-level verification for VLA-based robot policies

- Engineering-heavy evaluation pipelines that expose real failure modes

I care less about making models look good on paper — and more about making failure visible, interpretable, and actionable.

Building Real Systems (and Breaking Them)

Beyond research prototypes, I enjoy building interactive systems that force models to operate under realistic constraints.

I’ve worked on:

- Language-to-robot control systems that integrate learning, planning, and feedback

- VR and game-like environments (including Meta Quest–style setups) to study perception, interaction, and failure in embodied agents

- Simulation-driven stress tests that reveal where “robust” models actually collapse

Game engines and VR are especially useful here — they let us design controlled worlds where failures are unavoidable, observable, and repeatable.

A Bit More About Me 🌍

I speak three languages fluently (Mandarin, English, and German) in both everyday and academic settings, and I’ve lived and studied across Asia, Europe, and the US.

I enjoy travel, food, and building things that work — which is probably why I’m drawn to problems where theory meets reality.

I believe the most interesting AI research is:

- rigorous but not fragile

- principled but hands-on

- serious, yet a little fun

If that resonates, feel free to reach out — I’m always happy to chat.

§1 Recent Updates

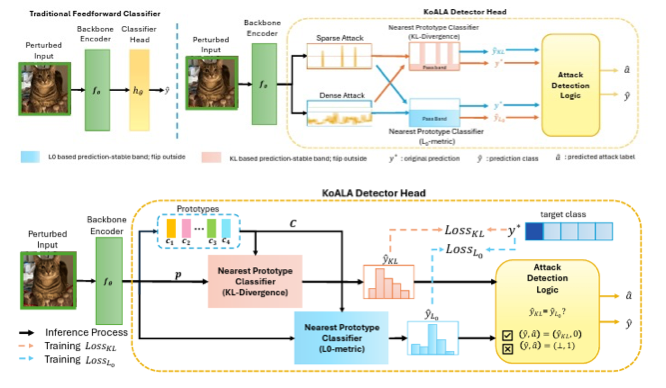

| Jan 15, 2025 | ICLR 2026 Submission — Submitted KoALA, our adversarial detection method with formal guarantees. We prove detection bounds for vision-language models under attack. |

|---|---|

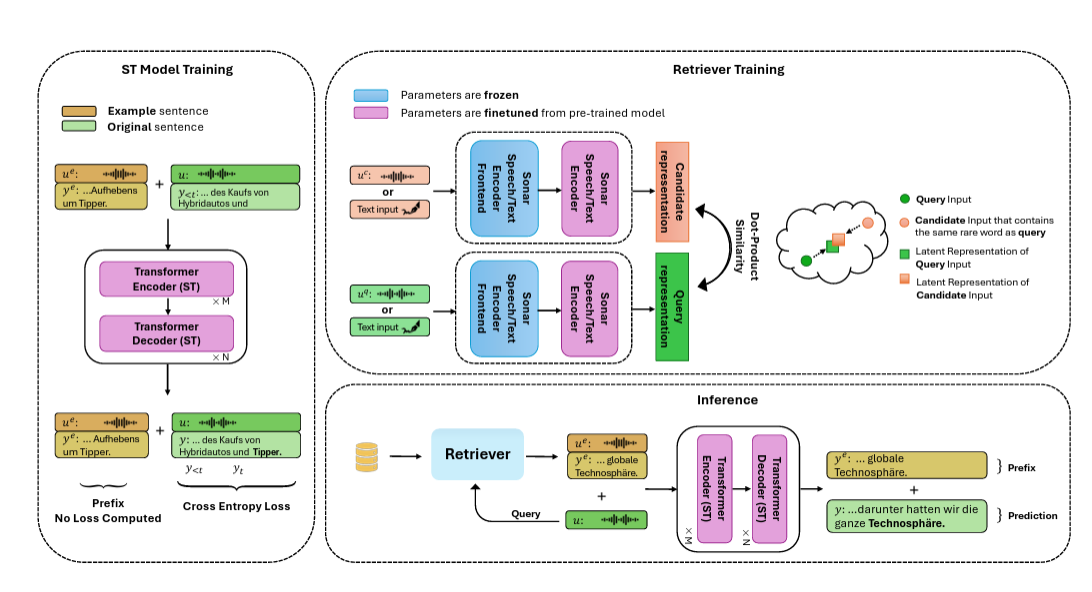

| Nov 15, 2024 | EMNLP 2024 — Our paper on retrieval-augmented speech translation was accepted! Cross-modal retrieval improves rare word accuracy by 17.6%. |

| Sep 01, 2024 | Started my PhD at UC Irvine, joining the Resilient Cyber-Physical Systems Lab. Grateful for the Graduate Dean’s Recruitment Fellowship! |

§2 Selected Publications

- arXiv

KoALA: KL-L0 Adversarial Detector via Label Agreement. arXiv preprint arXiv:2510.12752, 2025. Under review at ICLR 2026.

TL;DR: A provably guaranteed adversarial detector that combines KL divergence with an L0-based similarity and flags an input when the two heads predict different labels.
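To make the label-agreement rule concrete, here is a minimal Python sketch under loudly stated assumptions. It is not the KoALA implementation: representing classes by prototype vectors, the tolerance-based stand-in for an "L0-based similarity", and the names `kl_head`, `l0_head`, and `flag_adversarial` are all hypothetical. Only the idea of pairing a KL-based head with an L0-based head and flagging disagreement comes from the summary.

```python
import math
from typing import List, Sequence

# Hypothetical setup: each class is represented by a prototype vector (an assumption).

def softmax(v: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(v)
    exps = [math.exp(x - m) for x in v]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for strictly positive discrete distributions."""
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q))

def kl_head(feature: Sequence[float], prototypes: Sequence[Sequence[float]]) -> int:
    """Predict the class whose softmax-normalised prototype is closest in KL divergence."""
    p = softmax(feature)
    scores = [kl_divergence(p, softmax(proto)) for proto in prototypes]
    return min(range(len(prototypes)), key=scores.__getitem__)

def l0_head(feature: Sequence[float], prototypes: Sequence[Sequence[float]], tol: float = 0.1) -> int:
    """Hypothetical L0-style similarity: count coordinates within `tol` of each prototype."""
    scores = [sum(abs(f - c) <= tol for f, c in zip(feature, proto)) for proto in prototypes]
    return max(range(len(prototypes)), key=scores.__getitem__)

def flag_adversarial(feature: Sequence[float], prototypes: Sequence[Sequence[float]], tol: float = 0.1) -> bool:
    """Flag the input when the two heads predict different labels."""
    return kl_head(feature, prototypes) != l0_head(feature, prototypes, tol)
```

In a toy case with prototypes `[[1.0, 0.0, 0.0], [6.0, 5.0, 0.0]]`, the input `[6.0, 5.0, 5.0]` is a shifted copy of the first prototype, so the shift-invariant KL head picks class 0 while the coordinate-count head picks class 1, and the input is flagged. This only shows why two metrics with different sensitivities can disagree; it says nothing about KoALA's actual heads or its guarantees.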

- EMNLP

Optimizing Rare Word Accuracy in Direct Speech Translation with a Retrieval-and-Demonstration Approach. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024.

TL;DR: Cross-modal retrieval enables speech translation models to look up rare words during inference, improving tail-vocabulary accuracy by 17.6%.
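As a rough illustration of the retrieval-and-demonstration idea, the Python sketch that follows picks the stored example most similar to the query by cosine similarity and prepends it as a demonstration. Everything here is an assumption rather than the paper's code: the embeddings stand in for a cross-modal encoder that is not shown, the datastore is a toy list, and `retrieve_demonstration` and `with_demonstration` are hypothetical names.

```python
import math
from typing import List, Optional, Sequence, Tuple

Pair = Tuple[str, str]  # (source, translation) example from a hypothetical datastore

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve_demonstration(
    query_emb: Sequence[float],
    store_embs: Sequence[Sequence[float]],
    store_pairs: Sequence[Pair],
) -> Pair:
    """Return the stored example whose (assumed) embedding is most similar to the query."""
    scores = [cosine(query_emb, key) for key in store_embs]
    best = max(range(len(scores)), key=scores.__getitem__)
    return store_pairs[best]

def with_demonstration(
    query_src: str,
    query_emb: Sequence[float],
    store_embs: Sequence[Sequence[float]],
    store_pairs: Sequence[Pair],
) -> List[Tuple[str, Optional[str]]]:
    """Prepend the retrieved example; None marks the translation the model should generate."""
    demo = retrieve_demonstration(query_emb, store_embs, store_pairs)
    return [demo, (query_src, None)]
```

The design point the sketch tries to capture is that the model never has to memorize a rare word: if a similar example containing it exists in the datastore, retrieval puts its translation directly in the model's context at inference time.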

- CVCI

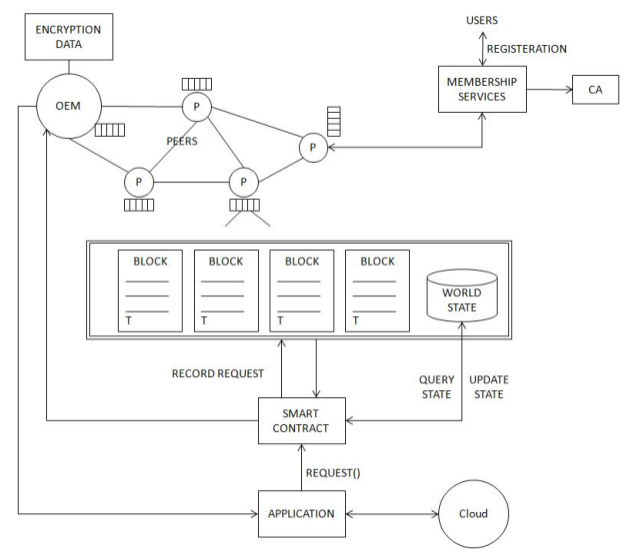

The Data Protection of Intelligent Connected Vehicles Cloud Control Framework Using Fully Homomorphic Encryption. In 2020 4th CAA International Conference on Vehicular Control and Intelligence (CVCI), 2020. Equal contribution.

TL;DR: Privacy-preserving cloud control for intelligent vehicles using fully homomorphic encryption.
